Decoding EEG Brain Signals using Recurrent Neural Networks

Abstract

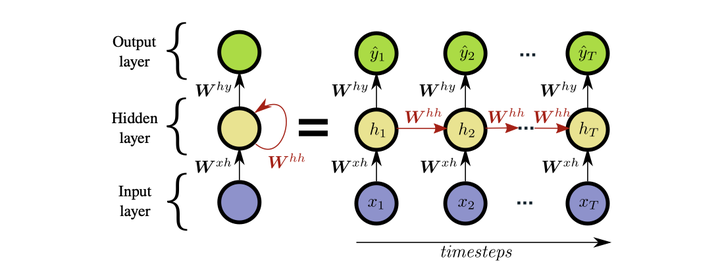

Brain-computer interfaces (BCIs) based on electroencephalography (EEG) enable direct communication between humans and computers by analyzing brain activity. In particular, modern BCIs can translate imagined movements into real-life control signals, e.g., to actuate a robotic arm or a prosthesis. BCIs of this type are already used in rehabilitation robotics and provide an alternative communication channel for patients suffering from amyotrophic lateral sclerosis or severe spinal cord injury. Current state-of-the-art methods are based on traditional machine learning, which requires identifying discriminative features. This is challenging because EEG signals are non-linear, non-stationary and time-varying, and it has led to stagnating progress in classification performance. Deep learning reduces the need for manual feature engineering through end-to-end decoding and is therefore a potentially promising approach to EEG signal classification. This thesis investigates how deep learning models such as long short-term memory (LSTM) networks and convolutional neural networks (CNNs) perform on the task of decoding motor imagery movements from EEG signals. For this task, an LSTM model and a CNN model are developed using recent advances in deep learning, such as batch normalization, dropout and cropped training for data augmentation. Evaluation is performed on a novel EEG dataset recorded from 20 healthy subjects. The LSTM model reaches the state-of-the-art performance of support vector machines with a cross-validated accuracy of 66.20%. The CNN model, which employs a time-frequency transformation in its first layer, outperforms the LSTM model and reaches a mean accuracy of 84.23%. This shows that deep learning approaches deliver competitive performance without the need for hand-crafted features, enabling end-to-end classification.
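Cropped training augments the data by cutting each EEG trial into shorter, overlapping time windows that all inherit the label of the trial, so one recorded trial yields many training examples. The following is a minimal sketch, not the thesis implementation: it assumes NumPy, a trial stored as an array of shape (channels, samples), and illustrative crop length and stride values. The function names `make_crops` and `predict_trial` are hypothetical, and averaging per-crop probabilities at test time is one common way to obtain a trial-level decision, not necessarily the one used here.

```python
import numpy as np


def make_crops(trial, label, crop_len, stride):
    """Cut one EEG trial into overlapping time windows (crops).

    trial: array of shape (n_channels, n_samples)
    label: class index of the trial; every crop inherits it
    crop_len, stride: window length and step size, both in samples
    (hypothetical helper for illustration)
    """
    _, n_samples = trial.shape
    if crop_len > n_samples:
        raise ValueError("crop_len must not exceed the trial length")
    # Start indices of all windows that fit completely inside the trial
    starts = range(0, n_samples - crop_len + 1, stride)
    crops = np.stack([trial[:, s:s + crop_len] for s in starts])
    labels = np.full(len(crops), label, dtype=np.int64)
    return crops, labels


def predict_trial(crop_probs):
    """Average per-crop class probabilities and return the winning class.

    crop_probs: array of shape (n_crops, n_classes), e.g. softmax outputs
    of a classifier applied to every crop of one trial (assumed setup).
    """
    return int(np.argmax(np.mean(crop_probs, axis=0)))


# Hypothetical example: 3 channels, 1000 samples (e.g. 4 s at 250 Hz)
rng = np.random.default_rng(0)
trial = rng.standard_normal((3, 1000))
crops, labels = make_crops(trial, label=1, crop_len=500, stride=125)
print(crops.shape)  # (5, 3, 500)
```

With a stride smaller than the crop length, neighbouring crops overlap, which trades more training examples for stronger correlation between them; the crop length must still be long enough to capture the motor imagery patterns of interest.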