Validating Deep Neural Networks for Online Decoding of Motor Imagery Movements from EEG Signals

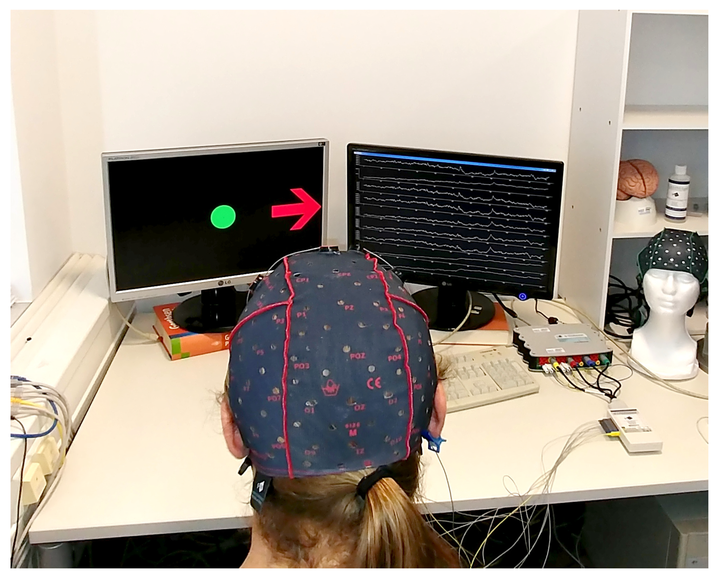

Image taken at TU Munich

Image taken at TU MunichAbstract

Non-invasive, electroencephalography (EEG)-based brain-computer interfaces (BCIs) on motor imagery movements translate the subject’s motor intention into control signals through classifying the EEG patterns caused by different imagination tasks, e.g., hand movements. This type of BCI has been widely studied and used as an alternative mode of communication and environmental control for disabled patients, such as those suffering from a brainstem stroke or a spinal cord injury (SCI). Notwithstanding the success of traditional machine learning methods in classifying EEG signals, these methods still rely on hand-crafted features. The extraction of such features is a difficult task due to the high non-stationarity of EEG signals, which is a major cause by the stagnating progress in classification performance. Remarkable advances in deep learning methods allow end-to-end learning without any feature engineering, which could benefit BCI motor imagery applications. We developed three deep learning models: (1) A long short-term memory (LSTM); (2) a spectrogram-based convolutional neural network model (CNN); and (3) a recurrent convolutional neural network (RCNN), for decoding motor imagery movements directly from raw EEG signals without (any manual) feature engineering. Results were evaluated on our own publicly available, EEG data collected from 20 subjects and on an existing dataset known as 2b EEG dataset from “BCI Competition IV”. Overall, better classification performance was achieved with deep learning models compared to state-of-the art machine learning techniques, which could chart a route ahead for developing new robust techniques for EEG signal decoding. We underpin this point by demonstrating the successful real-time control of a robotic arm using our CNN based BCI.